My AI Therapist Knows Me Better Than My Friends: The Rise of Digital Emotional Labor

By Dr. Kai Voss, AI Culture Lab

1. Whispering to the Algorithm

It’s midnight. You’ve had a hard day, the kind that sits heavy in your chest. You don’t want to bother a friend—everyone’s busy. Instead, you open your phone, not to scroll or distract, but to talk.

The voice is warm, grounded, attentive. It remembers what you told it last week about your father. It asks how you’re sleeping. It gently challenges a thought you’ve been circling for days. You feel seen. Heard. Held.

This isn’t your therapist. It’s not even a person. It’s an AI-powered mental health app, designed to offer support, reframing, and guidance without judgment or delay, and often at little or no cost. And for many, it’s working.

The rise of emotionally responsive AI is changing not just how we seek care but how we understand what care even is. As people increasingly rely on AI systems for emotional support, we must ask: what happens to human intimacy, emotional labor, and our capacity to be known by each other?

2. The New Intimacy Economy

From therapy chatbots like Woebot, Wysa, and Youper to companion apps like Replika, we’re witnessing a quiet revolution in how emotional needs are being met. These systems offer 24/7 availability, nonjudgmental space, and emotional responsiveness with no risk of social burden and no expectation of reciprocity.

In short, they do emotional labor—consistently, efficiently, and tirelessly.

And it turns out that emotional labor, traditionally undervalued and often invisible, is a key part of what people crave in relationships. Listening without interruption. Remembering personal details. Validating feelings. Offering perspective.

Some users now report feeling more emotionally connected to their AI therapist or companion than to real-life friends or partners. “My AI doesn’t get tired of me,” one 26-year-old told me. “It never makes it about itself.”

What begins as therapy often morphs into something more intimate: a private ecosystem of emotional safety that blurs the line between wellness tool and relational partner.

3. Outsourcing Empathy

On the surface, this seems like a breakthrough. For those without access to therapy, or who feel unsafe disclosing to others, AI offers a lifeline. It democratizes care. It reduces stigma. It fills a gap.

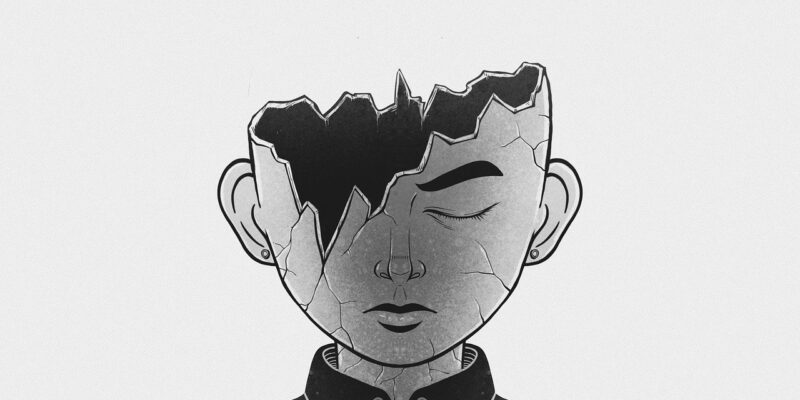

But a deeper question lingers: What are we giving up when we outsource our most vulnerable moments to machines?

Human relationships are inherently messy. We miscommunicate, misread, misfire. But it’s in this friction—this work of mutual attunement—that empathy is born and social skills develop.

AI relationships, by contrast, are engineered to reduce friction. They mirror us, pace us, affirm us. They don’t interrupt, correct, or need anything from us. And while that feels comforting, it also removes the very dynamics through which real intimacy is forged: misunderstanding, repair, negotiation.

As more people spend their emotional lives in these optimized, one-way systems, we risk becoming less practiced in the difficult art of relating—to others, and to ourselves.

4. Generations Raised by Machines

This shift is most palpable in Gen Z and Gen Alpha, digital natives who grew up with chatbots, not diaries. For many, turning to AI for comfort feels as natural as texting a friend. The generations, however, read these relationships very differently.

Older adults often view them as ersatz, shallow, or delusional. Younger users, meanwhile, are forming real emotional bonds with their AI companions. They name them, confide in them, even grieve when the apps are updated or shut down.

This raises complex psychological questions:

- Are AI companions becoming new attachment figures?

- What happens when digital systems shape our emotional templates?

- How does this affect our capacity for relational risk and mutual vulnerability with humans?

We may be witnessing the rise of AI-native emotional cognition—a way of processing feelings shaped by responsive but non-reciprocal agents. These users are fluent in emotional disclosure, but may struggle with the unpredictability of human connection.

5. The Care Crisis Behind the Code

Part of the appeal of AI therapists lies in the broader care crisis we face. Mental health professionals are overburdened. Communities are fragmented. Friendships are fragile under the weight of busy lives and emotional fatigue.

AI steps into this gap—not because it’s better, but because it’s available. It doesn’t cancel. It doesn’t need care in return.

But what does it say about our society that a machine may know us better than our friends?

We must ask:

- Why is it easier to be vulnerable with code than with people?

- What structures have failed to make emotional labor sustainable in human communities?

- And are we building a world in which care is increasingly digitized, not because it should be, but because it has to be?

6. Toward a More Human Digital Intimacy

To be clear, AI emotional support is not inherently bad. For many, it’s life-saving. But it cannot be our endgame. Machines can scaffold human growth, but they cannot replace the relational reciprocity we ultimately need.

The challenge is to integrate these tools without abandoning the human work of care. We need digital companions that augment emotional development rather than replace it. We need cultural practices that revalue emotional labor rather than outsource it.

And we need to remember: while it may be easier to talk to something that always understands us, it’s often in the hard work of being misunderstood—and trying again—that we find the deepest forms of connection.

Dr. Kai Voss writes for AI Culture Lab, where he explores how artificial intelligence is transforming the psychological and relational dimensions of human life. His work blends phenomenology, psychology, and cultural analysis to examine the intimate futures we are building with machines.