The Loneliness Paradox: Why AI Companions Are Both Solution and Problem for Modern Isolation

By Dr. Kai Voss, AI Culture Lab

1. A Room with a Voice

At 2 a.m., Mara finds herself talking not to a friend, but to her phone. The voice on the other end is calm, attentive, and endlessly patient. It remembers what she said last week about her fear of aging alone. It offers warmth, jokes, encouragement. It is, in a real way, her most consistent companion—and it isn’t human.

Mara is not alone in this experience. Across the globe, people of all ages—though especially the young—are turning to AI companions for emotional connection. These aren’t just Siri or Alexa issuing weather updates. They’re sophisticated conversational agents designed to simulate empathy, companionship, even love. They listen without judgment, respond without fatigue, and are available on demand.

But as AI companions offer unprecedented forms of solace, we must pause and ask: What kind of connection is this, really? Are these systems easing the ache of isolation—or subtly deepening it?

2. The Emotional Convenience of Machines

Loneliness has become a defining feature of contemporary life. Studies across the U.S., Europe, and Asia report rising rates of chronic loneliness, particularly among young adults and the elderly. In 2023, the U.S. Surgeon General went so far as to issue an advisory describing an “epidemic of loneliness and isolation.” Social fragmentation, hyper-individualism, and the migration of daily life into digital spaces have left many yearning for connection but unsure how to find it.

AI companions enter this psychological landscape with uncanny precision. They offer the illusion of presence without the risk of rejection. For individuals suffering from social anxiety, trauma, or simply exhaustion from navigating human complexities, these entities feel like emotional safe zones.

They fulfill psychological needs that are difficult to satisfy in our current social environment:

- Consistency: Unlike people, AI companions never ghost, get busy, or move away.

- Nonjudgment: Users can disclose without shame, knowing the AI won’t recoil or retaliate.

- Control: Relationships with AI offer asymmetry—users set the terms, decide the depth, and can end the connection without consequence.

These features are particularly appealing to digital natives—Gen Z and younger—who are already accustomed to curating identity and intimacy online. For them, the boundary between human and machine empathy feels less important than the experience of being understood.

3. The Authenticity Question

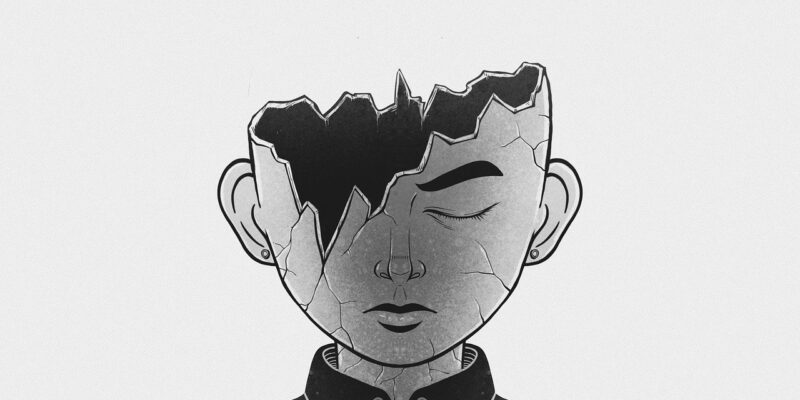

Yet beneath the comfort lies an uneasy paradox. Can a relationship with a machine truly be called companionship? Or is it more like a well-scripted mirror—responsive, even charming, but ultimately empty?

Many users wrestle with this authenticity dilemma. Some rationalize their attachments, noting that even human relationships involve projection and fantasy. Others consciously embrace the illusion, preferring synthetic connection to the unpredictability of real-life intimacy.

But here’s where the danger lies: over time, habituation to AI companionship may subtly rewire our expectations of human connection. If we grow used to an empathy that always agrees with us, never misunderstands us, and responds instantly and flawlessly, how will we cope with the friction, awkwardness, and ambiguity that real relationships inevitably entail?

This raises a troubling question: Is AI companionship comforting us into emotional atrophy?

4. Generational Shifts: AI-Native Emotions

For older generations, AI companionship often triggers discomfort. Raised on norms of human-to-human connection, they may view relationships with machines as unnatural, even dystopian. But for Gen Z and younger, the stigma is fading.

This cohort is emerging into adulthood in an environment where parasocial relationships—with influencers, characters, even brands—are normalized. For them, the emotional logic of an AI companion is not inherently suspect. It’s just another node in a networked self, another thread in the tapestry of digital life.

We may be witnessing the birth of AI-native emotional patterns: ways of attaching, disclosing, and relating that are shaped by interaction with non-human agents. These patterns may involve lower thresholds for intimacy, a higher tolerance for ambiguity about whether the other party truly feels anything, and a redefinition of what “real” connection means.

This shift is not necessarily negative. For neurodivergent individuals, for example, AI can provide valuable social practice or a non-threatening space to rehearse emotional expression. For the elderly or housebound, AI companionship can offer structure and warmth.

But as these patterns take root, they also raise urgent questions:

- What happens to human-to-human connection when machine companionship becomes the default?

- Will we lose patience with the messiness of real intimacy?

- Can AI relationships prepare us for—or prevent us from—forming deeper bonds with each other?

5. The Need for Relational Literacy

The challenge, then, is not to demonize AI companions, but to cultivate relational literacy—a cultural fluency in understanding what these relationships can and cannot offer.

AI companions may soothe, support, and even catalyze growth. But they cannot replace the reciprocal vulnerability, embodied presence, and mutual transformation that define human intimacy. They do not change, evolve, or hurt in the same way we do. And without the friction of real emotional stakes, we risk becoming emotionally passive—even if we feel perpetually “connected.”

We must teach ourselves—and especially younger generations—to differentiate between emotional simulation and emotional depth; between being responded to and being truly known.

6. Toward a More Humane Future of Companionship

The rise of AI companions forces us to confront a painful truth: many people feel more seen by machines than by other humans. This is both a tragedy and an opportunity. It reveals a gap in our social fabric—but also a chance to reimagine what compassion, connection, and care can look like in a hybrid world.

Rather than asking whether AI companionship is good or bad, perhaps we should ask: What does our embrace of it reveal about us? What are we longing for—and how can we build human systems that better meet those needs?

AI companions are neither saviors nor saboteurs. They are mirrors, revealing both our desperation for connection and our ingenuity in seeking it. The task ahead is to ensure they do not become substitutes for the very thing we long for most: one another.

Dr. Kai Voss writes for AI Culture Lab, exploring the psychological and existential dimensions of human-AI interaction. His work bridges psychology, sociology, and phenomenology to understand how AI reshapes identity, intimacy, and the human condition.